An omni-channel experience with StableNet®

Feb 7, 2018 | Blog, Network Management

Now that the New Year’s Eve fireworks have died out and all the “best of 2017” shows are over, it is time to talk about what happened behind the scenes. The highlights of 2017 for Infosim® are probably already known to you. Therefore, I would like to talk about the things we haven’t talked about yet.

Besides making StableNet® even better, we are working towards an omni-channel experience for our Unified Network and Management Solution. For those who haven’t encountered this buzzword yet: it simply means that the same experience is available through every possible channel. New channels are popping up very fast these days, so we have focused on integrating three main channels that have recently gained particular relevance.

In 2017, we released a new version of the iPhone app with more options for alarm handling and mobile dashboards, as well as several usability improvements. With the StableNet® App, you have access to the key StableNet® features on your smartphone and tablet.

Alongside the app, we also released our first Apple Watch app. Always wanted to have your StableNet® alarm overview on your watch face, and to check and acknowledge alarms without even taking your phone out of your pocket? This has now become reality.

What else comes to mind? There is a channel that gained a lot of popularity in 2017: voice! More and more people are using Siri, Google Voice, Cortana, and so on to communicate with different services. We decided to integrate an Echo device from Amazon with StableNet®. The Echo device communicates with the REST API of StableNet® to retrieve information about the network and services. A video says more than words, so see a short demo below.
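To make the Echo-to-REST flow a little more concrete, here is a minimal Python sketch of what a voice handler could look like. It is not our actual integration: the URL, the `AlarmOverviewIntent` name, and the JSON shape of the alarms (a list of objects with a `severity` field) are all assumptions made up for illustration, and authentication is left out. Only the request and response envelope follows the general Alexa skill JSON format.

```python
import json
import urllib.request

# Hypothetical endpoint: the real StableNet REST paths are not given in this post.
ALARMS_URL = "https://stablenet.example.com/rest/alarms"


def fetch_open_alarms(url=ALARMS_URL):
    """Fetch open alarms as a list of dicts (assumed JSON shape)."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.load(resp)


def summarize(alarms):
    """Turn a list of alarm dicts into one short sentence for speech output."""
    if not alarms:
        return "There are no open alarms."
    critical = sum(1 for alarm in alarms if alarm.get("severity") == "critical")
    return f"Open alarms: {len(alarms)}. Critical: {critical}."


def speech_response(text):
    """Wrap plain text in the JSON envelope an Alexa skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": True,
        },
    }


def handle_request(event, fetch=fetch_open_alarms):
    """Answer the (hypothetical) AlarmOverviewIntent, reject everything else."""
    request = event.get("request", {})
    intent = request.get("intent", {}).get("name")
    if request.get("type") == "IntentRequest" and intent == "AlarmOverviewIntent":
        return speech_response(summarize(fetch()))
    return speech_response("Sorry, I can only report the alarm overview.")
```

Passing the fetch function in as a parameter keeps the handler testable without a live server: in a test, `fetch` can simply be a function that returns a fixed list of alarms.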

For some of our customers, public cloud technologies with data being sent or hosted outside company borders are strictly prohibited. In order to also allow voice recognition for those parties, we are looking into Mycroft as a private cloud alternative to Amazon Echo.

Mycroft is open source and can be run completely locally. A regular installation of Mycroft uses an online Speech-To-Text (STT) service, just like the Amazon Echo, and has similar quality. However, with one of our customers we are working on a completely local demo. As expected, a local STT server does not give results as good as one that is shared among many users. This is, for the time being, the one drawback of running voice recognition in a private cloud environment. However, we think that even with these limitations the benefits clearly outweigh the drawbacks. We have tested a local installation of Mycroft, using a local STT in a Docker container. Any one-word command is understood perfectly, but for sentences one has to speak a little slower than normal.

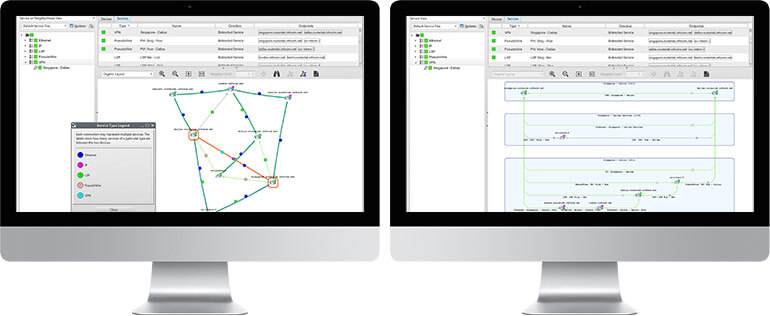

I would like to end this blog with one last channel: augmented reality. Within StableNet® 8, we have introduced a new service analyzer, with which it is possible to create hierarchical service views.

Now what happens if the topmost service in this image is not just a one-to-one service but, for example, a VPLS with many endpoints? A 2D visualization of the hierarchy of such a VPLS service would be difficult to understand. That is why we are experimenting with virtual and augmented reality. This is of course not only a question of implementation, but also of discovering how to make good use of the third dimension. Therefore, this project will continue this year.

Stay tuned for the next channel-demo movie!

Marius Heuler

CTO at Infosim®
